Cloud storage is convenient, but it can get expensive fast. I wanted a simple, reliable, and low-cost backup solution for my projects without getting locked into Google Drive or Dropbox pricing.

That’s where Hetzner Storage Boxes come in: a generous amount of storage, WebDAV support out of the box, and a price that’s hard to beat.

In this article, I’ll show you how I set up my own “personal backup drive” using Hetzner Storage Boxes, with a focus on one-way backups (local → remote only). This way, I always have an up-to-date backup of my important folders without worrying about accidental remote deletions syncing back to my laptop.

Why Hetzner Storage Boxes?

When I started looking for a backup solution, cost and flexibility were the two main factors. Hetzner Storage Boxes checked both boxes. They start at just a few euros per month and provide a generous amount of space, making them one of the most affordable options for long-term storage.

Some of the highlights include:

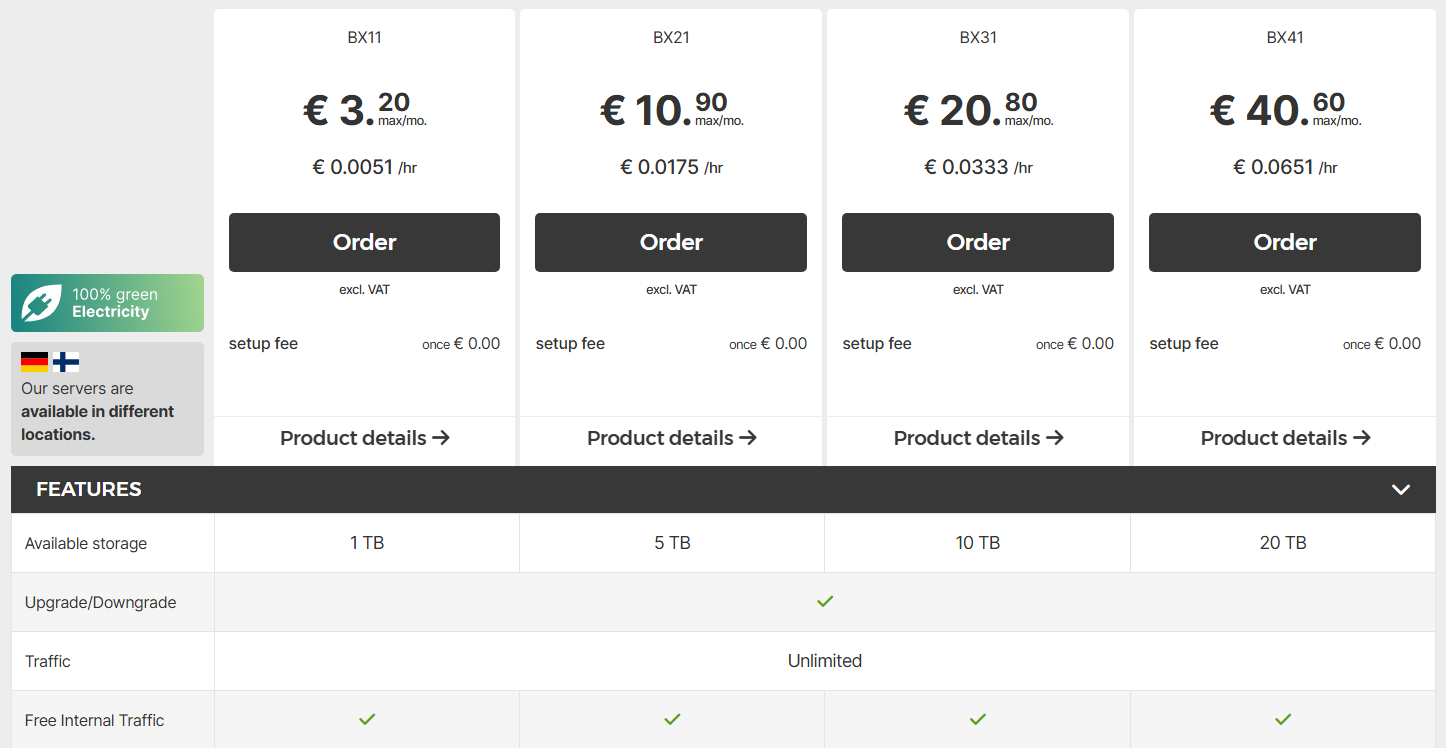

- Ample storage capacity – plans start at 1 TB and scale up as needed.

- Multiple access protocols – WebDAV, FTP, SFTP, and rsync are all supported out of the box.

- Built-in backup features – snapshots and backup intervals can be configured to add extra safety.

- Cost efficiency – far cheaper than popular services like Google Drive, Dropbox, or OneDrive for the same capacity.

Below is a price comparison for individual plans, as of mid-2025:

| Provider / Plan | Storage | Price (per month) |
|---|---|---|
| Hetzner Storage Box (BX11) | 1 TB | €3.20 |
| Hetzner Storage Box (BX21) | 5 TB | €10.90 |
| Google One – 100 GB | 100 GB | €1.99 |
| Google One – 2 TB | 2 TB | €9.99 |
| Dropbox Plus – 2 TB | 2 TB | €9.99 |
| OneDrive Personal – 1 TB | 1 TB | €9.20 |

What This Means for Your Wallet

- Low entry cost – Hetzner gives you 1 TB for just over €3/month. OneDrive charges €9.20 for the same 1 TB, roughly three times as much, while Google One and Dropbox charge €9.99 for 2 TB, which still works out to about €5 per TB.

- No gimmicks – Hetzner includes unlimited data transfer, optional snapshot features, and support for protocols like WebDAV, FTP, SFTP, and rsync.

- Budget scaling – Paying just a few euros monthly makes it perfect for low-cost backups, especially if your goal is simplicity and affordability.
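To make the comparison concrete, the cost per terabyte can be computed directly from the table. This is a small sketch that uses only the prices listed above and treats 100 GB as 0.1 TB; the names `PLANS` and `price_per_tb` are illustrative.

```python
# Capacity (TB) and monthly price (EUR), copied from the comparison table (mid-2025).
PLANS = {
    "Hetzner BX11": (1.0, 3.20),
    "Hetzner BX21": (5.0, 10.90),
    "Google One 100 GB": (0.1, 1.99),
    "Google One 2 TB": (2.0, 9.99),
    "Dropbox Plus 2 TB": (2.0, 9.99),
    "OneDrive Personal 1 TB": (1.0, 9.20),
}


def price_per_tb(capacity_tb: float, monthly_eur: float) -> float:
    """Return the monthly price in euros per terabyte."""
    return monthly_eur / capacity_tb


if __name__ == "__main__":
    # Print the plans from cheapest to most expensive per terabyte.
    ranked = sorted(PLANS.items(), key=lambda item: price_per_tb(*item[1]))
    for name, (capacity_tb, monthly_eur) in ranked:
        print(f"{name:<24} {price_per_tb(capacity_tb, monthly_eur):6.2f} EUR/TB per month")
```

Per terabyte, the larger Hetzner plan comes out cheapest, and the small 100 GB plan is the most expensive way to buy storage even though its sticker price is the lowest.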

Because WebDAV is natively supported, it integrates seamlessly with Python. That means I can create a simple, lightweight sync solution, like the one in this guide, without relying on heavy third-party tools or vendor lock-in.
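WebDAV is an extension of HTTP, which is why it is easy to reach from Python. As a rough illustration using only the standard library, the sketch below builds (but does not send) a `PROPFIND` request that would list one directory level. The function name `build_propfind_request`, the host, and the credentials are placeholders of mine; check Hetzner's WebDAV docs for your actual URL.

```python
import base64
import urllib.request


def build_propfind_request(base_url: str, remote_path: str,
                           username: str, password: str) -> urllib.request.Request:
    """Build (without sending) a WebDAV PROPFIND request for one directory level."""
    url = base_url.rstrip("/") + "/" + remote_path.lstrip("/")
    # HTTP Basic authentication: base64 of "username:password".
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url, method="PROPFIND")
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Depth", "1")  # only the directory and its direct children
    return request


if __name__ == "__main__":
    # Placeholder host and credentials; replace with your own Storage Box details.
    req = build_propfind_request(
        "https://uXXXXXX.your-storagebox.de", "/HetznerDrive", "uXXXXXX", "secret"
    )
    print(req.get_method(), req.full_url)
    # Sending it would be: urllib.request.urlopen(req, timeout=30)
```

In practice a client library such as webdavclient3, used later in this guide, wraps requests like this so you don't have to build them by hand.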

Hetzner Storage Box Configuration

You can sign up for Hetzner here.

Hetzner offers several Storage Box plans, depending on your needs:

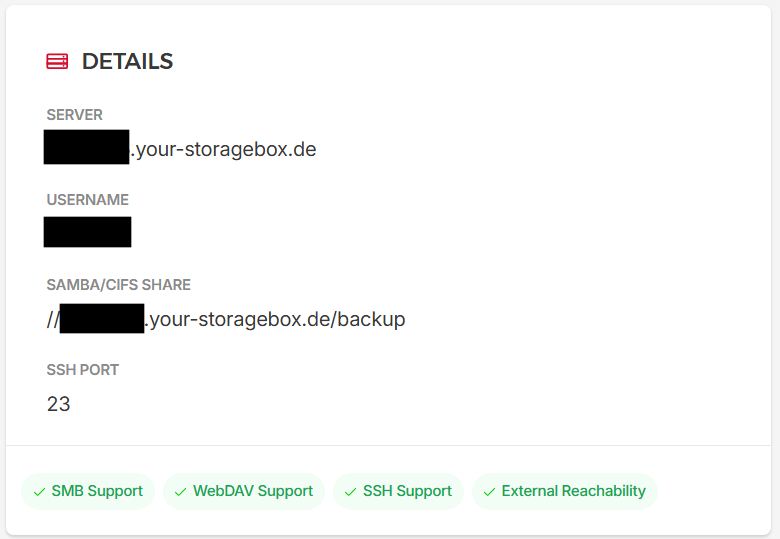

Once you’ve got your Storage Box, you’ll need a few details for the scripts in this guide to work:

- Username (normally looks like uXXXXXX)

- Password for your main account (note: this script doesn’t support sub-accounts)

- WebDAV access and External Reachability enabled

- Storage Box URL (see Hetzner’s WebDAV docs)

Here’s an example configuration:
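As a minimal sketch, assuming the three variable names that the script in this guide reads with `os.getenv` (`HETZNER_BASE_URL`, `HETZNER_USERNAME`, `HETZNER_PASSWORD`), the `.env` values and a quick sanity check could look like this. The URL pattern and the helper name `missing_settings` are assumptions; confirm the URL against Hetzner's WebDAV docs.

```python
import os

# Variable names match those read by load_config() in the sync script.
REQUIRED_VARS = ("HETZNER_BASE_URL", "HETZNER_USERNAME", "HETZNER_PASSWORD")

# Example .env contents (placeholder values, not real credentials):
#   HETZNER_BASE_URL=https://uXXXXXX.your-storagebox.de
#   HETZNER_USERNAME=uXXXXXX
#   HETZNER_PASSWORD=your-password


def missing_settings(env=None) -> list:
    """Return the names of required settings that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_settings()
    if missing:
        print("Missing settings:", ", ".join(missing))
    else:
        print("All WebDAV settings are present.")
```

Running a check like this before starting the sync gives a clear message when a credential is missing, instead of a less obvious connection error later.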

And if you’d like to go beyond just Storage Boxes, Hetzner also offers cloud VPS at a budget-friendly price. I’ve put together a PDF guide that shows you how to turn one into your own Platform-as-a-Service (PaaS). A great next step if you want to self-host projects, apps, or services, all without breaking the bank.

First Attempt: File Monitoring Only

My first approach was the simplest one: just watch my local folders for changes and push any new or modified files to my Hetzner Storage Box. The idea was to keep my backup updated automatically without having to manually run anything or do a full sync each time.

Here’s how I thought about it:

- Monitor my local folder for new or changed files (including moves and deletes).

- Upload these file changes to the remote storage via WebDAV.

Here’s the initial Python script:

```python
import os
import time
import traceback
from pathlib import Path

from dotenv import load_dotenv
from watchdog.events import FileSystemEventHandler
from watchdog.observers import Observer
from webdav3.client import Client

LOCAL_DIR = Path("./HetznerDrive").resolve()
REMOTE_DIR = "/HetznerDrive"

# Load environment variables from .env file
load_dotenv()


# --- Helpers -----------------------------------------------------------------

def load_config():
    """Load configuration from environment variables"""
    # Convert to webdavclient3 format
    options = {
        'webdav_hostname': os.getenv('HETZNER_BASE_URL'),
        'webdav_login': os.getenv('HETZNER_USERNAME'),
        'webdav_password': os.getenv('HETZNER_PASSWORD'),
        'webdav_timeout': 30
    }
    return options


def create_webdav_client(config_options):
    """Create and configure WebDAV client"""
    client = Client(config_options)
    client.verify = True  # verify the server's TLS certificate
    return client


# --- Watchdog Local Event Handler --------------------------------------------

class LocalHandler(FileSystemEventHandler):
    def __init__(self, client):
        super().__init__()
        self.client = client

    def on_modified(self, event):
        if not event.is_directory:
            try:
                self.upload(Path(event.src_path))
            except Exception as e:
                print(f"Error handling file modification for {event.src_path}: {e}")

    def on_moved(self, event):
        try:
            rel_source = str(Path(event.src_path).relative_to(LOCAL_DIR))
            remote_path_source = f"{REMOTE_DIR}/{rel_source}".replace("\\", "/")
            rel_dest = str(Path(event.dest_path).relative_to(LOCAL_DIR))
            remote_path_dest = f"{REMOTE_DIR}/{rel_dest}".replace("\\", "/")
            print(f"Moving {rel_source} to {rel_dest}")
            self.client.move(remote_path_source, remote_path_dest)
            print(f"Moved {remote_path_source} to {remote_path_dest}")
        except Exception as e:
            # Report the local path: the remote path may not have been computed yet
            print(f"Error handling file move for {event.src_path}: {e}")

    def on_created(self, event):
        if not event.is_directory:
            try:
                self.upload(Path(event.src_path))
            except Exception as e:
                print(f"Error handling file creation for {event.src_path}: {e}")
        else:
            try:
                rel = str(Path(event.src_path).relative_to(LOCAL_DIR))
                remote_path = f"{REMOTE_DIR}/{rel}".replace("\\", "/")
                self.client.mkdir(remote_path)
                print(f"Created remote directory: {remote_path}")
            except Exception as e:
                print(f"Error handling directory creation for {event.src_path}: {e}")

    def on_deleted(self, event):
        # Only file deletions are mirrored; directory deletions are ignored
        if not event.is_directory:
            try:
                rel = str(Path(event.src_path).relative_to(LOCAL_DIR))
                remote_path = f"{REMOTE_DIR}/{rel}".replace("\\", "/")
                self.client.clean(remote_path)
                print(f"Deleted remote: {rel}")
            except Exception as e:
                print(f"Error handling file deletion for {event.src_path}: {e}")

    def upload(self, path: Path):
        try:
            rel = str(path.relative_to(LOCAL_DIR)).replace("\\", "/")
            remote_path = f"{REMOTE_DIR}/{rel}"
            print(f"Uploading {rel} -> {remote_path}")
            self.client.upload_sync(remote_path=remote_path, local_path=str(path))
            print(f"Successfully uploaded: {rel}")
        except Exception as e:
            print(f"Error uploading {path}: {e}")
            # Don't re-raise - we want to continue monitoring other files


# --- Main Sync Loop ----------------------------------------------------------

def main():
    try:
        config_options = load_config()
        client = create_webdav_client(config_options)

        print(f"Local directory: {LOCAL_DIR}")
        print(f"Remote directory: {REMOTE_DIR}")
        LOCAL_DIR.mkdir(exist_ok=True)

        # Ensure remote base directory exists
        try:
            print(f"Ensuring remote directory exists: {REMOTE_DIR}")
            client.mkdir(REMOTE_DIR)
        except Exception as e:
            print(f"Warning: Could not create remote directory {REMOTE_DIR}: {e}")
            print("This might be normal if the directory already exists or if you don't have write permissions")

        # Start local watcher
        handler = LocalHandler(client)
        obs = Observer()
        obs.schedule(handler, str(LOCAL_DIR), recursive=True)
        obs.start()

        print("Starting file sync (Ctrl+C to stop)...")
        try:
            while True:
                time.sleep(30)
        except KeyboardInterrupt:
            print("\nStopping sync...")
        finally:
            # Always stop the observer before joining, whatever ended the loop
            obs.stop()
            obs.join()
        print("Sync stopped.")
    except Exception as e:
        print(f"Fatal error: {e}")
        traceback.print_exc()
        return 1
    return 0


if __name__ == "__main__":
    # Propagate main()'s return value as the process exit code
    raise SystemExit(main())
```

I store my WebDAV credentials in a .env file, which the script loads using python-dotenv. This keeps sensitive information separate from the code and easy to update.

The script uses Watchdog to monitor the local folder for changes. Whenever a file is created, modified, deleted, or moved, the corresponding action is applied to the remote folder. This keeps the backup in step with my local folder for as long as the script is running.

Files are uploaded using a helper function that preserves relative paths, so the remote folder structure stays the same as my local one. Moves and renames are applied as a WebDAV move on the remote side rather than a fresh upload, which avoids duplicates, and file deletions remove the corresponding remote file to keep the backup clean. Directory deletions are ignored in this first version.
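The path mapping is the piece most worth testing in isolation. Here is a minimal sketch of the same idea as a pure function; the name `to_remote_path` is hypothetical and not part of the script above. It joins path components instead of replacing backslashes, so it behaves the same for Windows and POSIX paths.

```python
from pathlib import PurePath, PurePosixPath, PureWindowsPath


def to_remote_path(local_file: PurePath, local_root: PurePath, remote_root: str) -> str:
    """Map a local file to its remote path, preserving the relative layout."""
    # relative_to() raises ValueError if local_file is not inside local_root.
    relative = local_file.relative_to(local_root)
    # WebDAV paths always use forward slashes, whatever the local OS uses.
    return "/".join([remote_root.rstrip("/"), *relative.parts])


if __name__ == "__main__":
    print(to_remote_path(
        PurePosixPath("/home/me/HetznerDrive/projects/notes.txt"),
        PurePosixPath("/home/me/HetznerDrive"),
        "/HetznerDrive",
    ))
    print(to_remote_path(
        PureWindowsPath(r"C:\Users\me\HetznerDrive\projects\notes.txt"),
        PureWindowsPath(r"C:\Users\me\HetznerDrive"),
        "/HetznerDrive",
    ))
```

Both calls map to the same remote location, `/HetznerDrive/projects/notes.txt`.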

The main() function sets up the WebDAV client, ensures the local and remote directories exist, and starts the Watchdog observer. A loop runs indefinitely, and I can stop the sync anytime with Ctrl+C.

I’ve actually written a separate article that dives deeper into file system monitoring with Watchdog, covering how it works under the hood and when it’s useful. You can check it out here: Mastering File System Monitoring with Watchdog in Python.

Improved Approach: Add Startup Sync

It was a good first attempt for basic incremental backups, but I realized that if I wanted a clean and reliable remote mirror, I needed to add a startup sync step before entering monitoring mode.

That’s where the improved approach comes in:
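The general idea of a startup sync can be sketched as follows. This is my own minimal illustration under stated assumptions, not the article's implementation: before watching for changes, compare a listing of local files with a listing of remote files and work out what to upload and what to delete. The names `scan_local` and `plan_startup_sync` are hypothetical, and the remote listing is assumed to be a dict of relative path to size, obtained however your WebDAV client provides it.

```python
from pathlib import Path


def scan_local(local_root: Path) -> dict:
    """Map each file's relative POSIX-style path to its size in bytes."""
    return {
        path.relative_to(local_root).as_posix(): path.stat().st_size
        for path in local_root.rglob("*")
        if path.is_file()
    }


def plan_startup_sync(local: dict, remote: dict) -> tuple:
    """Plan a one-way (local -> remote) mirror from two {path: size} listings."""
    # Upload files that are missing remotely or whose size differs.
    to_upload = sorted(p for p, size in local.items() if remote.get(p) != size)
    # Delete remote files that no longer exist locally.
    to_delete = sorted(p for p in remote if p not in local)
    return to_upload, to_delete


if __name__ == "__main__":
    local_listing = scan_local(Path("./HetznerDrive").resolve())
    remote_listing = {}  # placeholder: fill from your WebDAV client's listing
    uploads, deletions = plan_startup_sync(local_listing, remote_listing)
    print(f"{len(uploads)} file(s) to upload, {len(deletions)} remote file(s) to delete")
```

Comparing sizes is a cheap heuristic that misses edits which leave the size unchanged; comparing modification times or checksums would be stricter, at the cost of more remote requests.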
