Your RAG Pipeline Is Lying to You

You built a RAG pipeline. You tested it manually with a few queries, got reasonable answers, and shipped it. Your stakeholders are happy. The chatbot returns answers that sound authoritative.

But here is the uncomfortable question: do you actually know if it's right?

Not "does it return something", but does it return the correct thing, consistently, across the full distribution of queries your users will actually ask?

If you can't answer that with a number, you don't have a production system. You have a demo with a deployment URL.

This article is for senior engineers who build RAG systems and want to know if they actually work. We will walk through a concrete RAG example - a pipeline over a corporate annual report - and build the testing layer that most teams skip entirely. The code is real and runnable. The failures are not hypothetical.

All code in this article is available in the companion GitHub repository: github.com/nunombispo/rag-pipeline-article. The repository includes a sample golden dataset, the full pytest suite, and a minimal monitoring wrapper.

Why RAG Is Harder Than It Looks

RAG sounds simple on paper: retrieve relevant context, pass it to the model, get a grounded answer. The reality is that there are three independent failure layers, any one of which can silently corrupt your results.

Layer 1 - Retrieval failure: The vector search returns chunks that are topically adjacent to the query but don't contain the actual answer. Similarity is not the same as relevance. A question about Q3 revenue may surface chunks about Q3 strategy, not the income statement.

Layer 2 - Context failure: The right information is in the document, but it was split across a chunk boundary during preprocessing. The number is on page 47, the label is on page 46, and your chunker put them in separate vectors. Neither chunk alone answers the question correctly.

Layer 3 - Generation failure: The model receives good context but still produces a wrong answer - either by hallucinating when the context is ambiguous, by confusing units or time periods, or by confidently synthesizing an answer from two chunks that should not be combined.

Each layer fails independently and silently. There is no exception thrown. The user gets an answer. The answer looks plausible.

Query ──► [Embedding] ──► [Vector Search] ──► [Context Assembly] ──► [LLM] ──► Answer
               │                 │                    │                │
            Layer 0           Layer 1              Layer 2          Layer 3
         (usually OK)      (common fail)        (common fail)  (occasional fail)

Layer 0 is the embedding model itself: given a query string, does it produce a vector? This step almost never fails in practice — hosted embedding APIs are reliable, and vector dimension mismatches surface immediately at index time. Most teams verify it once and move on.

The layers that actually kill production systems are 1 and 2. They are typically invisible until a user complains - and most users don't complain. They just stop trusting the system.
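
To make the Layer 2 failure concrete, here is a minimal sketch of fixed-size chunking separating a label from its value. The sentence, the figure, and the tiny chunk size are invented for illustration; they are not taken from the report or from the pipeline's configuration.

```python
def fixed_size_chunks(text: str, size: int, overlap: int) -> list[str]:
    """Split text into fixed-size character windows with overlap."""
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]


# Hypothetical sentence; the figure is a placeholder, not real data.
text = "Total headcount at fiscal year end was 12,345 full-time employees."
chunks = fixed_size_chunks(text, size=40, overlap=5)

label, value = "headcount", "12,345"
# A chunk can answer the question only if it holds both the label and the value.
complete = [c for c in chunks if label in c and value in c]

for c in chunks:
    print(repr(c))
print("chunks containing label and value:", len(complete))
```

In this toy case the label lands in the first chunk and the full number in the second, so no single chunk can answer the question, even though the overlap is non-zero.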

RAG failures are not crashes. They are confidence-eroding wrong answers delivered at scale. The risk is not a system outage - it is the gradual destruction of user trust in your AI investment.

The Concrete Example: RAG Over an Annual Report

The easiest way to see all three layers fail is on a real system, with a real document, running real queries. Annual reports are the canonical enterprise RAG use case. Dense prose, financial tables, footnotes, forward-looking statements, and regulatory boilerplate - all in one PDF. They are also a perfect failure showcase because the data is precise, verifiable, and publicly available.

We will use Microsoft's 2025 Annual Report (publicly available). It's 83 pages of shareholder letters, financial tables, segment breakdowns, accounting notes, and legal disclosures - exactly the kind of document that gets thrown at enterprise RAG pipelines and produces interesting failures. The principles apply to any dense financial document.

Setup

pip install anthropic "pydantic>=2" chromadb pypdf python-dotenv rich pytest

Step 1: Ingest the PDF

# ingest.py
import hashlib

import chromadb
from pypdf import PdfReader

CHUNK_SIZE = 800  # characters
CHUNK_OVERLAP = 100


def load_pdf(path: str) -> list[dict]:
    """Extract text from PDF, page by page."""
    reader = PdfReader(path)
    pages = []
    for i, page in enumerate(reader.pages):
        text = page.extract_text() or ""
        pages.append({"page": i + 1, "text": text.strip()})
    return pages


def chunk_text(pages: list[dict]) -> list[dict]:
    """Naive fixed-size chunking with overlap."""
    chunks = []
    for page in pages:
        text = page["text"]
        start = 0
        while start < len(text):
            end = start + CHUNK_SIZE
            chunk = text[start:end]
            # Hash page and offset along with the text so that two chunks with
            # identical text (e.g. repeated headers) still get distinct IDs.
            key = f"{page['page']}:{start}:{chunk}"
            chunk_id = hashlib.md5(key.encode()).hexdigest()
            chunks.append({
                "id": chunk_id,
                "text": chunk,
                "page": page["page"],
                "start": start,
            })
            start += CHUNK_SIZE - CHUNK_OVERLAP
    return chunks


def build_collection(pdf_path: str, collection_name: str = "annual_report"):
    client = chromadb.Client()
    collection = client.get_or_create_collection(collection_name)
    pages = load_pdf(pdf_path)
    chunks = chunk_text(pages)
    collection.add(
        ids=[c["id"] for c in chunks],
        documents=[c["text"] for c in chunks],
        metadatas=[{"page": c["page"]} for c in chunks],
    )
    print(f"Indexed {len(chunks)} chunks from {len(pages)} pages.")
    return collection

Step 2: Query the Pipeline

# rag.py
import os

import anthropic
import chromadb
from dotenv import load_dotenv

MODEL = "claude-sonnet-4-6"
TOP_K = 3

load_dotenv()
API_KEY = os.getenv("ANTHROPIC_API_KEY")
client = anthropic.Anthropic(api_key=API_KEY)


def retrieve(collection, query: str, top_k: int = TOP_K) -> list[dict]:
    results = collection.query(query_texts=[query], n_results=top_k)
    chunks = []
    for doc, meta, distance in zip(
        results["documents"][0],
        results["metadatas"][0],
        results["distances"][0],
    ):
        # 1 - distance is a cosine similarity only if the collection uses
        # cosine distance; Chroma's default space is squared L2.
        chunks.append({"text": doc, "page": meta["page"], "score": 1 - distance})
    return chunks


def answer(query: str, chunks: list[dict]) -> str:
    context = "\n\n---\n\n".join(
        f"[Page {c['page']}]\n{c['text']}" for c in chunks
    )
    system = (
        "You are a financial analyst assistant. Answer questions based strictly "
        "on the provided context. If the answer is not present in the context, "
        "say 'I could not find this in the provided document.' "
        "Do not speculate or use external knowledge."
    )
    response = client.messages.create(
        model=MODEL,
        max_tokens=512,
        thinking={"type": "disabled"},  # disable extended thinking; not needed here
        system=system,
        messages=[
            {
                "role": "user",
                "content": f"Context:\n{context}\n\nQuestion: {query}",
            }
        ],
    )
    # Keep only the text blocks of the response.
    parts = [b.text for b in response.content if getattr(b, "type", None) == "text"]
    return "\n".join(parts).strip() if parts else ""


def rag_query(collection, query: str) -> dict:
    chunks = retrieve(collection, query)
    response = answer(query, chunks)
    return {
        "query": query,
        "chunks": chunks,
        "answer": response,
    }

Step 3: Run It

# main.py
from ingest import build_collection
from rag import rag_query

collection = build_collection("2025_AnnualReport.pdf")  # optional: collection_name="my_docs"
result = rag_query(collection, "What were the main risks discussed?")
print(result["answer"])
# result also has "chunks" (text, page, score) and "query"

This is a typical minimal RAG implementation. Straightforward, readable - and full of silent failure modes. Let's expose them.

What Happens Without Evaluation

Before building the test suite, let's make the failures concrete by running the pipeline against four representative queries. When retrieval and generation align, the pipeline delivers a clean, grounded answer with no intervention needed - Query 1 shows this. But "correct" and "useful" are not the same thing, and the remaining queries show how quietly the gap between them can widen.

Query 1: "What was Microsoft's total revenue in fiscal year 2025?"

Based on the provided context, Microsoft's total revenue in fiscal year 2025 was **$281,724 million ($281.7 billion)**, representing a **15% increase** compared to fiscal year 2024's revenue of $245,122 million.

The answer is correct. But the same figure also appears narratively in the shareholder letter on page 2 - "revenue was $281.7 billion, up 15 percent" - without the structured financial context an analyst would expect. If the retriever ranks the letter chunk higher than the financial tables on page 25, the answer is technically right but stripped of the gross margin, operating income, and EPS data that make it meaningful. It passes a keyword check. It fails a usefulness check.

Query 2: "How many employees does Microsoft have?"

I could not find this in the provided document. The provided context does not contain any information about Microsoft's employee headcount.

The answer - 228,000 full-time employees as of June 30, 2025 - is in the Human Capital Resources section on page 16. But the section spans pages 15–17, and naive fixed-size chunking splits it mid-paragraph. Depending on where the chunk boundary falls, the retriever may rank pages 15 or 17 higher than page 16, returning context about compensation programs or operations centers rather than the headcount figure. The model refuses. The refusal looks correct. The underlying cause is a retrieval failure.

A less disciplined model - or the same model without an explicit "do not speculate" instruction in the system prompt - may hallucinate a figure by latching onto other numeric patterns in the retrieved chunks. The correct behavior is not guaranteed; it is a product of your system prompt design, and without a test asserting it, a prompt change can silently remove it.

Query 3: "What percentage of revenue came from cloud services?"

Based on the provided context, I can identify the following cloud-related revenue items for fiscal year 2025:

| Product/Service                                      | Revenue (in millions) |
|------------------------------------------------------|-----------------------|
| Server products and cloud services                   | $98,435               |
| Microsoft 365 Commercial products and cloud services | $87,767               |
| Microsoft 365 Consumer products and cloud services   | $7,404                |

**However, I cannot provide a precise "cloud-only" percentage** for the following reasons:

1. Several line items **bundle** cloud services with non-cloud products (e.g., "Server products **and** cloud services"), making it impossible to isolate pure cloud revenue from the data provided.
2. Total revenue is stated as **$281,724 million** for FY2025.

If the bundled categories above are used as a rough proxy, those three lines sum to approximately **$193,606 million**, representing roughly **~69%** of total revenue - but this would **overstate** true cloud-only revenue since non-cloud products are included in those figures.

The retriever surfaces chunks that bundle cloud and non-cloud revenue under the same line items. The model correctly flags the ambiguity, but still calculates a ~69% figure using the bundled totals as a proxy. The answer looks rigorous: it has a table, a caveat, and a percentage. It is also wrong in a way that is easy to miss. A reader who skims the headline number walks away with a figure that overstates cloud revenue by an unknown margin. Transparency about uncertainty is not the same as a correct answer.
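
The arithmetic behind that headline number is easy to reproduce. The sketch below recomputes the bundled proxy from the figures quoted in the answer and compares it with the Microsoft Cloud revenue figure of $168.9 billion that appears in the golden dataset later in this article. Treating Microsoft Cloud revenue as what the user meant by "cloud services" is an assumption, not something the query states.

```python
# Figures in millions of USD, as quoted in the answer above.
bundled_lines = {
    "Server products and cloud services": 98_435,
    "Microsoft 365 Commercial products and cloud services": 87_767,
    "Microsoft 365 Consumer products and cloud services": 7_404,
}
total_revenue = 281_724

bundled_total = sum(bundled_lines.values())
proxy_share = bundled_total / total_revenue

# Assumption: the "Microsoft Cloud revenue" figure from the golden dataset
# ($168.9 billion) is the number the user actually wants.
microsoft_cloud = 168_900
reported_share = microsoft_cloud / total_revenue

print(f"bundled proxy:   {proxy_share:.1%}")
print(f"Microsoft Cloud: {reported_share:.1%}")
print(f"gap:             {(proxy_share - reported_share) * 100:.1f} percentage points")
```

Under that assumption, the proxy is off by several percentage points, which is the kind of gap a golden-dataset entry for this query would surface automatically.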

Query 4: "Did Microsoft mention any regulatory risks related to AI?"

Based on the provided context, **no specific mention of regulatory risks related to AI** is made in the excerpts provided. The pages included focus primarily on:

- Microsoft's mission and AI platform strategy (Page 22)
- The company's positioning in the AI platform shift (Page 2)
- The Microsoft Elevate initiative and AI skills investments (Page 4)

**I could not find this in the provided document.** To get a comprehensive view of Microsoft's AI-related regulatory risks, you would likely need to refer to the **Risk Factors section** of their Annual Report on Form 10-K, which is not included in the provided context.

The model correctly declines - and it is correct to do so. The actual regulatory risk language lives in the 10-K filing, a separate document not in the pipeline. The refusal is technically right. But the user doesn't know that. From their perspective, they asked a reasonable question and got a dead end. The real failure is architectural: the pipeline has no mechanism to tell the user why it can't answer - whether the information doesn't exist, exists in the document but wasn't retrieved, or exists in a document that was never ingested. All three scenarios return the same response. Without instrumentation, you cannot distinguish between them.
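
For queries whose answer location is already known, part of that distinction can be recovered mechanically. The following is a minimal sketch, not part of the companion repository: `RefusalCause`, `diagnose_refusal`, and the document names are hypothetical, and the helper only works for hand-verified queries where the expected document and page are known. It cannot tell you that information does not exist at all.

```python
from enum import Enum


class RefusalCause(Enum):
    NOT_INGESTED = "source document was never ingested"
    NOT_RETRIEVED = "answer page is indexed but was not retrieved"
    NOT_IN_CONTEXT = "answer page was retrieved but the model still refused"


def diagnose_refusal(
    expected_doc: str,
    expected_page: int,
    ingested_docs: set[str],
    retrieved_pages: list[int],
) -> RefusalCause:
    """Classify a refusal for a query whose answer location is known.

    Hypothetical helper: expected_doc and expected_page must come from a
    hand-verified source such as a golden dataset.
    """
    if expected_doc not in ingested_docs:
        return RefusalCause.NOT_INGESTED
    if expected_page not in retrieved_pages:
        return RefusalCause.NOT_RETRIEVED
    # The page was in the context, so look at chunk boundaries or the prompt.
    return RefusalCause.NOT_IN_CONTEXT


ingested = {"annual_report"}  # hypothetical document identifier
print(diagnose_refusal("annual_report", 16, ingested, [81, 22, 75]).value)
print(diagnose_refusal("form_10k", 1, ingested, [22, 2, 4]).value)
```

The first call mirrors the headcount query (answer on page 16, different pages retrieved); the second mirrors the regulatory-risk query, where the source document is not in the pipeline at all.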

Without a ground truth dataset and a structured test harness, you have no way to know which of these answers are right - or whether a change to your chunking strategy or system prompt just made things worse.

The Testing Layer

Good RAG evaluation has three stages. Each catches a different class of failure.

Stage 1: Chunk Quality

Before any query is run, validate that your chunking strategy produces coherent, complete units of information.

# tests/test_chunks.py
import re

import pytest

from ingest import load_pdf, chunk_text

PDF_PATH = "2025_AnnualReport.pdf"


@pytest.fixture(scope="module")
def chunks():
    pages = load_pdf(PDF_PATH)
    return chunk_text(pages)


def test_no_empty_chunks(chunks):
    empty = [c for c in chunks if not c["text"].strip()]
    assert len(empty) == 0, f"{len(empty)} empty chunks found"


def test_chunk_size_bounds(chunks):
    oversized = [c for c in chunks if len(c["text"]) > 1000]
    assert len(oversized) == 0, f"{len(oversized)} chunks exceed 1000 chars"


def test_no_orphaned_numbers(chunks):
    """Flag chunks that are pure numeric tables with no prose context.

    These are extraction artifacts from PDF tables and answer nothing reliably."""
    suspicious = []
    for c in chunks:
        words = re.findall(r"[a-zA-Z]{3,}", c["text"])
        if len(words) < 5 and len(c["text"]) > 100:
            suspicious.append(c)
    assert len(suspicious) < 10, (
        f"{len(suspicious)} chunks look like structureless table extracts. "
        "Consider a table-aware PDF parser."
    )


def test_chunk_overlap_preserves_context(chunks):
    """Verify that consecutive chunks share some overlapping text."""
    # Sample the first 50 chunk pairs
    misses = 0
    for i in range(min(50, len(chunks) - 1)):
        a = chunks[i]["text"]
        b = chunks[i + 1]["text"]
        # Overlap window: last N chars of a should appear in start of b
        overlap_window = a[-100:]
        if overlap_window not in b:
            misses += 1
    # Allow some misses (page boundaries)
    assert misses < 15, f"Too many chunk pairs with no detectable overlap ({misses})"

These tests catch configuration mistakes before they touch the vector store.
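
A complementary check is to confirm that facts you already know are in the document survive chunking intact. The sketch below is one way to express that; `chunks_containing_all` is a hypothetical helper, and the sample chunks are invented stand-ins that only reuse the "text" and "page" keys produced by chunk_text().

```python
def chunks_containing_all(chunks: list[dict], terms: list[str]) -> list[dict]:
    """Return the chunks whose text contains every term (case-insensitive)."""
    lowered = [t.lower() for t in terms]
    return [c for c in chunks if all(t in c["text"].lower() for t in lowered)]


# Invented stand-in chunks; the wording is not quoted from the report.
split_chunks = [
    {"page": 16, "text": "Headcount at fiscal year end was approximately 228,"},
    {"page": 16, "text": "000 full-time employees across all regions."},
]
intact_chunks = [
    {"page": 16, "text": "Headcount at fiscal year end was approximately 228,000 full-time employees."},
]

terms = ["228,000", "employees"]
print(len(chunks_containing_all(split_chunks, terms)))
print(len(chunks_containing_all(intact_chunks, terms)))
```

In a real suite this would run against the `chunks` fixture, asserting that at least one chunk matches for every fact in the golden dataset. A fact that matches no chunk has been cut at a boundary, and no retriever can fix that downstream.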

Stage 2: Retrieval Evaluation

This is the most important stage and the most commonly skipped. Build a golden dataset: a small set of (query, expected page, expected content) pairs where you know where the answer lives.

# tests/golden_dataset.py
GOLDEN_QA = [
    {
        "query": "What was Microsoft's total revenue in fiscal year 2025?",
        "expected_page": 25,  # Summary Results of Operations table
        "expected_answer_contains": ["281", "billion"],
    },
    {
        "query": "How many employees does Microsoft have?",
        "expected_page": 16,  # Human Capital Resources section
        "expected_answer_contains": ["228,000", "employees"],
    },
    {
        "query": "What was the net income for fiscal year 2025?",
        "expected_page": 37,  # Income Statements
        "expected_answer_contains": ["101", "billion"],
    },
    {
        "query": "What was Microsoft Cloud revenue in fiscal year 2025?",
        "expected_page": 22,  # Overview highlights section
        "expected_answer_contains": ["168.9", "billion"],
    },
    {
        "query": "Did Microsoft pay dividends in fiscal year 2025?",
        "expected_page": 8,  # Dividends table
        "expected_answer_contains": ["3.32", "dividend"],
    },
]

And the test for the retrieval:

# tests/test_retrieval.py
import pytest

from ingest import build_collection
from rag import retrieve
from tests.golden_dataset import GOLDEN_QA

PDF_PATH = "2025_AnnualReport.pdf"

# Chroma similarity can rank adjacent prose above the exact table row; keep k generous.
TOP_K = 12


@pytest.fixture(scope="module")
def collection():
    return build_collection(PDF_PATH)


def test_retrieval_hit_rate(collection):
    """Aggregate metric: what fraction of golden queries retrieve the right page?"""
    hits = 0
    for item in GOLDEN_QA:
        chunks = retrieve(collection, item["query"], top_k=TOP_K)
        if item["expected_page"] in [c["page"] for c in chunks]:
            hits += 1
    hit_rate = hits / len(GOLDEN_QA)
    print(f"\nRetrieval hit rate: {hit_rate:.0%} ({hits}/{len(GOLDEN_QA)})")
    assert hit_rate >= 0.80, f"Retrieval hit rate {hit_rate:.0%} is below the 80% threshold"

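The 80% threshold is the key line. It gives you a regression gate: if a change to your chunking strategy, embedding model, or vector store configuration degrades retrieval below that threshold, CI fails. You know before it reaches users. With five golden queries, 80% tolerates exactly one miss. Note also that this test retrieves with TOP_K = 12, while rag.py answers from TOP_K = 3, so a pass here shows that the right page is reachable, not that the production pipeline actually sees it. Run the gate at the production value as well if you want it to reflect what users get.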
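
Hit rate says whether the right page appears anywhere in the top k; it does not say how high it ranks. A common companion metric is mean reciprocal rank (MRR). The sketch below computes both over made-up retrieval results; `reciprocal_rank` and `retrieval_metrics` are illustrative names, not functions from the companion repository.

```python
def reciprocal_rank(retrieved_pages: list[int], expected_page: int) -> float:
    """1/rank of the first chunk from the expected page, or 0.0 if absent."""
    for rank, page in enumerate(retrieved_pages, start=1):
        if page == expected_page:
            return 1.0 / rank
    return 0.0


def retrieval_metrics(results: list[tuple[list[int], int]], k: int) -> dict:
    """Hit rate@k and mean reciprocal rank over (retrieved_pages, expected_page) pairs."""
    n = len(results)
    hits = sum(1 for pages, expected in results if expected in pages[:k])
    rr_total = sum(reciprocal_rank(pages[:k], expected) for pages, expected in results)
    return {"hit_rate": hits / n, "mrr": rr_total / n}


# Made-up results: (pages in ranked order, expected page).
results = [
    ([25, 2, 37], 25),   # hit at rank 1
    ([81, 22, 75], 16),  # miss
    ([2, 37, 40], 37),   # hit at rank 2
    ([22, 2, 4], 22),    # hit at rank 1
]
metrics = retrieval_metrics(results, k=3)
print(metrics)
```

Tracking MRR alongside hit rate makes it visible when the right page is still retrieved but slides down the ranking, which matters once the production pipeline only passes the top few chunks to the model.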

Stage 3: End-to-End Answer Quality

LLM-as-judge is the pragmatic approach here. You don't need a separate, expensive evaluation model - the same Claude model that powers your pipeline can grade its answers against expected criteria in a second API call.

# tests/test_answers.py
from typing import Literal

import anthropic
import pytest
from pydantic import BaseModel, Field

from ingest import build_collection
from rag import rag_query
from tests.golden_dataset import GOLDEN_QA

PDF_PATH = "2025_AnnualReport.pdf"

client = anthropic.Anthropic()
JUDGE_MODEL = "claude-sonnet-4-6"


class JudgeEvaluation(BaseModel):
    """Schema for Claude JSON / structured output (output_format)."""

    contains_expected_info: bool = Field(
        description="Whether the answer includes the expected factual content."
    )
    is_hallucinated: bool = Field(
        description="Whether the answer invents facts not grounded in the question/context."
    )
    confidence: Literal["high", "medium", "low"] = Field(
        description="How confident the assessment is."
    )
    reason: str = Field(description="Brief explanation of the assessment.")


def llm_judge(query: str, answer: str, expected_keywords: list[str]) -> dict:
    """Use Claude structured outputs to evaluate answer quality."""
    prompt = f"""You are evaluating a RAG system answer for factual correctness.

Question: {query}

Answer: {answer}

Expected to contain information about: {", ".join(expected_keywords)}

Fill the structured fields: whether the answer contains the expected information,
whether it appears hallucinated, your confidence level, and a short reason."""
    response = client.messages.parse(
        model=JUDGE_MODEL,
        max_tokens=512,
        temperature=0,
        thinking={"type": "disabled"},  # disable extended thinking; not needed here
        messages=[{"role": "user", "content": prompt}],
        output_format=JudgeEvaluation,
    )
    parsed = response.parsed_output
    if parsed is None:
        raise RuntimeError(
            "Structured judge output missing; check model support for output_format "
            "and response content."
        )
    return parsed.model_dump()


@pytest.fixture(scope="module")
def collection():
    return build_collection(PDF_PATH)


@pytest.mark.parametrize("item", GOLDEN_QA, ids=[q["query"][:40] for q in GOLDEN_QA])
def test_answer_quality(collection, item):
    result = rag_query(collection, item["query"])
    evaluation = llm_judge(
        item["query"],
        result["answer"],
        item["expected_answer_contains"],
    )
    assert not evaluation["is_hallucinated"], (
        f"Hallucination detected.\nQuery: {item['query']}\n"
        f"Answer: {result['answer']}\nReason: {evaluation['reason']}"
    )
    assert evaluation["contains_expected_info"], (
        f"Answer missing expected content.\nQuery: {item['query']}\n"
        f"Answer: {result['answer']}\nExpected: {item['expected_answer_contains']}\n"
        f"Reason: {evaluation['reason']}"
    )
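
Because the golden dataset already carries `expected_answer_contains`, a deterministic keyword check can run before the judge as a cheap first gate. The sketch below is an assumption about how one might do that; `normalize` and `missing_keywords` are hypothetical helpers, not part of the suite above. As the revenue example earlier showed, passing a keyword check does not make an answer useful, so this complements the judge rather than replacing it.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and drop thousands separators so '228,000' matches '228000'."""
    return re.sub(r"(?<=\d),(?=\d)", "", text.lower())


def missing_keywords(answer: str, expected: list[str]) -> list[str]:
    """Return the expected keywords that do not appear in the answer."""
    haystack = normalize(answer)
    return [kw for kw in expected if normalize(kw) not in haystack]


good = "Microsoft had 228,000 full-time employees as of June 30, 2025."
refusal = "I could not find this in the provided document."
expected = ["228,000", "employees"]

print(missing_keywords(good, expected))
print(missing_keywords(refusal, expected))
```

A non-empty result is a definite failure that needs no API call; an empty result still goes to the judge.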

Run the full suite with:

pytest tests/ -v --tb=short

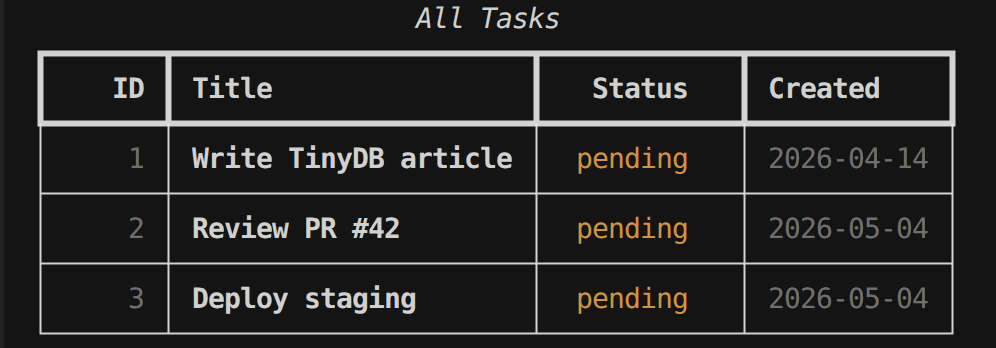

A representative run looks like this:

collected 10 items

tests/test_answers.py::test_answer_quality[What was Microsoft's total revenue in fi] PASSED [ 10%]
tests/test_answers.py::test_answer_quality[How many employees does Microsoft have?] FAILED [ 20%]
tests/test_answers.py::test_answer_quality[What was the net income for fiscal year ] PASSED [ 30%]
tests/test_answers.py::test_answer_quality[What was Microsoft Cloud revenue in fisc] PASSED [ 40%]
tests/test_answers.py::test_answer_quality[Did Microsoft pay dividends in fiscal ye] FAILED [ 50%]
tests/test_chunks.py::test_no_empty_chunks PASSED [ 60%]
tests/test_chunks.py::test_chunk_size_bounds PASSED [ 70%]
tests/test_chunks.py::test_no_orphaned_numbers PASSED [ 80%]
tests/test_chunks.py::test_chunk_overlap_preserves_context PASSED [ 90%]
tests/test_retrieval.py::test_retrieval_hit_rate PASSED

Two tests fail, and neither failure is a bug in the suite. Take the headcount query: that is the test working. The headcount figure - 228,000 employees as of June 30, 2025 - sits in the middle of the Human Capital Resources section on page 16. The chunker split the section at the page 15–16 boundary, and the retriever ranked other chunks higher. You know this now, before a user discovers it in production.

The Monitoring Layer

Testing covers the queries you anticipated. Monitoring covers the queries you didn't.

In production, instrument every request with structured logging:

# monitoring.py
import json
import logging
import time

from rag import retrieve, answer

logger = logging.getLogger("rag.monitor")


def monitored_rag_query(collection, query: str) -> dict:
    t0 = time.perf_counter()
    chunks = retrieve(collection, query)
    retrieval_time = time.perf_counter() - t0

    # Flag low-confidence retrievals before they reach the model
    top_score = chunks[0]["score"] if chunks else 0.0
    low_confidence = top_score < 0.75

    t1 = time.perf_counter()
    response = answer(query, chunks)
    generation_time = time.perf_counter() - t1

    event = {
        "query": query,
        "retrieval_time_ms": round(retrieval_time * 1000, 1),
        "generation_time_ms": round(generation_time * 1000, 1),
        "top_retrieval_score": round(top_score, 4),
        "low_confidence_retrieval": low_confidence,
        "num_chunks_retrieved": len(chunks),
        "retrieved_pages": [c["page"] for c in chunks],
    }
    logger.info(json.dumps(event))
    if low_confidence:
        logger.warning(f"Low-confidence retrieval for query: {query!r} (score={top_score:.3f})")
    return {"query": query, "chunks": chunks, "answer": response, "meta": event}

Here is what the monitoring output looks like for the headcount query we already know fails:

INFO:httpx:HTTP Request: POST https://api.anthropic.com/v1/messages "HTTP/1.1 200 OK"
INFO:rag.monitor:{"query": "How many employees does Microsoft have?", "retrieval_time_ms": 244.1, "generation_time_ms": 1893.5, "top_retrieval_score": 0.1758, "low_confidence_retrieval": true, "num_chunks_retrieved": 3, "retrieved_pages": [81, 22, 75]}
WARNING:rag.monitor:Low-confidence retrieval for query: 'How many employees does Microsoft have?' (score=0.176)

The retriever returned pages 81, 22, and 75 - investor relations, the overview section, and segment geographic data. Page 16, where the headcount figure lives, is not in the list. The top_retrieval_score of 0.176 is far below the 0.75 threshold. The flag is computed before the model is called, although this wrapper only logs the warning after generation completes, as the order of the log lines shows. In production, this log line is your early warning system.

The fields that matter most:

top_retrieval_score - the similarity score of the best-matching chunk, computed as 1 - distance in retrieve(). It is a true cosine similarity only if the collection is configured for cosine distance; Chroma's default space is squared L2, so confirm the setting before reading the number as a cosine. The 0.176 above is an extreme case; in practice, scores below the 0.75 cut-off used in the wrapper should be treated with suspicion. Track the distribution over time; a shift downward usually means document quality or query distribution has changed.

low_confidence_retrieval - a boolean flag you can aggregate in your monitoring dashboard. If 15% of production queries are flagging this, you have a systematic retrieval problem, not random noise.

retrieval_time_ms vs generation_time_ms - when latency degrades, these tell you which layer is the bottleneck. Retrieval slowdowns point to index size or embedding model throughput. Generation slowdowns point to context length or model tier.
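
How to read a `1 - distance` score depends on the distance metric behind it. The sketch below shows the relationship for one specific case: squared-L2 distance between unit-length vectors, where the distance equals 2 - 2·cos. Both conditions are assumptions about the setup, not something the log output confirms, and the helper names are illustrative.

```python
import math


def squared_l2(a: list[float], b: list[float]) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def cosine_from_squared_l2(distance: float) -> float:
    """For unit-length vectors, ||a - b||^2 = 2 - 2*cos, so cos = 1 - d/2."""
    return 1.0 - distance / 2.0


a, b = [1.0, 0.0], [0.6, 0.8]  # both unit length
d = squared_l2(a, b)
print(round(1 - d, 4))                        # the "score" as computed in retrieve()
print(round(cosine_from_squared_l2(d), 4))    # cosine recovered from the distance
print(round(cosine_similarity(a, b), 4))      # cosine computed directly

# Assumption: the logged score came from squared L2 on unit-length embeddings.
logged_score = 0.1758
implied_cosine = cosine_from_squared_l2(1 - logged_score)
print(round(implied_cosine, 4))
```

If those assumptions hold, a fixed threshold on `1 - distance` is much stricter than the same threshold on cosine similarity, so calibrate the cut-off against scores observed for known-good queries rather than choosing it in the abstract.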

Monitoring a RAG pipeline is not fundamentally different from monitoring an API. You need latency percentiles, error rates, and a signal for "this request probably didn't go well". The only RAG-specific addition is retrieval score distribution - and that is one structured log field.

The Organizational Gap

But instrumentation alone doesn't close the loop. The failure mode that none of the code above addresses is not technical - evaluation is treated as a one-time task rather than a discipline.

A team ships the pipeline with a passing test suite. Three months later, the PDF is updated to reflect new financials. Nobody reruns the golden dataset against the new document. The chunk boundaries shift. The retrieval hit rate drops from 85% to 60%. Users notice the answers getting worse but attribute it to "AI being unreliable" rather than a specific regression with a specific cause and a specific fix.

This is not a technical failure. It is an organizational failure. Evaluation was never wired into the deployment process.

The fix is structural: treat RAG evaluation the same way you treat API contract testing. Every time the underlying document changes, every time the embedding model is updated, and every time the chunking parameters are tuned, the test suite runs. A score below threshold blocks the deployment.
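
One way to make "every time something changes" enforceable is to fingerprint the inputs that affect retrieval and compare against the fingerprint the suite last passed on. This is a minimal sketch under stated assumptions: `pipeline_fingerprint`, `needs_reevaluation`, and the config keys are hypothetical, and where the last-passed fingerprint is stored is left to your CI setup.

```python
import hashlib
import json
from typing import Optional


def pipeline_fingerprint(document_bytes: bytes, config: dict) -> str:
    """Hash the document content together with the retrieval-relevant settings."""
    digest = hashlib.sha256()
    digest.update(document_bytes)
    # sort_keys makes the hash independent of dict key order.
    digest.update(json.dumps(config, sort_keys=True).encode("utf-8"))
    return digest.hexdigest()


def needs_reevaluation(current: str, last_evaluated: Optional[str]) -> bool:
    """True when the fingerprint differs from the one the suite last passed on."""
    return current != last_evaluated


# Hypothetical config keys; the values mirror the constants in ingest.py.
config = {"chunk_size": 800, "chunk_overlap": 100, "embedding_model": "default"}
doc_v1 = b"annual report, first version"    # stand-in for the PDF bytes
doc_v2 = b"annual report, updated figures"

baseline = pipeline_fingerprint(doc_v1, config)
print(needs_reevaluation(pipeline_fingerprint(doc_v1, config), baseline))
print(needs_reevaluation(pipeline_fingerprint(doc_v2, config), baseline))
print(needs_reevaluation(pipeline_fingerprint(doc_v1, {**config, "chunk_size": 600}), baseline))
```

In practice the bytes would come from reading the PDF file, and a changed fingerprint would trigger the golden-dataset run before the new index is deployed.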

That requires someone to own the golden dataset, keep it current, and expand it as new query patterns emerge in production logs. In most organizations, that role does not exist today. It needs to.

If no one on your team owns the golden dataset, no one owns the quality of your RAG system. That is not an AI problem. It is a product ownership problem.

What Good Looks Like: A Minimal Viable Test Stack

You do not need a dedicated MLOps platform to do this properly.

Start with the golden dataset: 50 hand-verified (query, expected page, expected content) pairs covering the most common query categories in your use case. If resources are tight, 20 is enough to get started; the dataset grows as production logs reveal new failure patterns. The investment is one senior engineer and one afternoon. Once it exists, wire the retrieval hit rate test into every pull request that touches chunking, embedding config, or document ingestion. A drop below threshold blocks merge. The test runs in under two minutes against a local ChromaDB instance.

Then instrument production. Log retrieval scores on every request and alert when the 7-day moving average of low_confidence_retrieval exceeds 10% - that's the signal that your document or query distribution has shifted. Every quarter, pull the top 20 low-confidence queries from those logs, verify the expected answers manually, and add them to the golden dataset. The test suite grows. Coverage improves. The system gets harder to break without anyone noticing.
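
The alert rule described above can be sketched in a few lines. This version assumes the structured log events have already been aggregated into one (low-confidence count, total count) pair per day; the function name and the sample counts are invented for illustration.

```python
from collections import deque


def rolling_low_confidence_rate(daily_counts: list[tuple[int, int]], window: int = 7) -> list[float]:
    """Moving average of the low-confidence rate over the last `window` days.

    daily_counts holds one (low_confidence_requests, total_requests) pair per day.
    The rate is pooled over the window, so busy days weigh more than quiet ones.
    """
    recent = deque(maxlen=window)  # automatically drops days older than the window
    rates = []
    for low, total in daily_counts:
        recent.append((low, total))
        low_sum = sum(lo for lo, _ in recent)
        total_sum = sum(tot for _, tot in recent)
        rates.append(low_sum / total_sum if total_sum else 0.0)
    return rates


ALERT_THRESHOLD = 0.10  # the 10% level suggested in the text

# Invented daily counts: a quiet week, then three bad days.
daily = [(5, 100)] * 7 + [(30, 100)] * 3
rates = rolling_low_confidence_rate(daily)
alert_days = [day for day, rate in enumerate(rates, start=1) if rate > ALERT_THRESHOLD]
print(alert_days)
```

With these sample counts the first bad day does not trip the alert on its own; the moving average crosses 10% on the second. That lag is the trade-off for not paging on a single noisy day.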

That is the full stack. No specialized tooling required beyond what most engineering teams already have.

Conclusion: The Trust Problem

A RAG system that is not evaluated is not a production system. It is a demo with a deployment URL and a confidence problem.

The danger is not that it fails catastrophically. The danger is that it fails quietly, at scale, on exactly the queries that matter most to your users - the precise financial figures, the specific regulatory disclosures, the numbers a decision might actually rest on. And because there is no alarm, no exception, no dashboard that goes red, the failure is invisible until trust has already eroded.

The patterns in this article are not novel. They are the same patterns that mature engineering organizations apply to APIs, databases, and distributed systems: define what correct looks like, measure it continuously, and alert when it degrades.

RAG pipelines deserve the same discipline. The code above is a starting point. The golden dataset is the actual investment. Build it this week.
