How to Build an AI Chatbot for Q&A on Any Website

In today's digital era, the need for immediate access to information is more pressing than ever.

As users explore websites, they typically prefer to find swift answers to their questions without sifting through extensive content.

The answer to this lies in an advanced chatbot, designed to read a website's content and deliver accurate responses to user inquiries.

This article delves into the development of such a chatbot, with web scraping and conversational AI technologies.

Example of the ChatBot script with a question about https://developer-service.io/:

Human: what is this website about?

Loading answers...

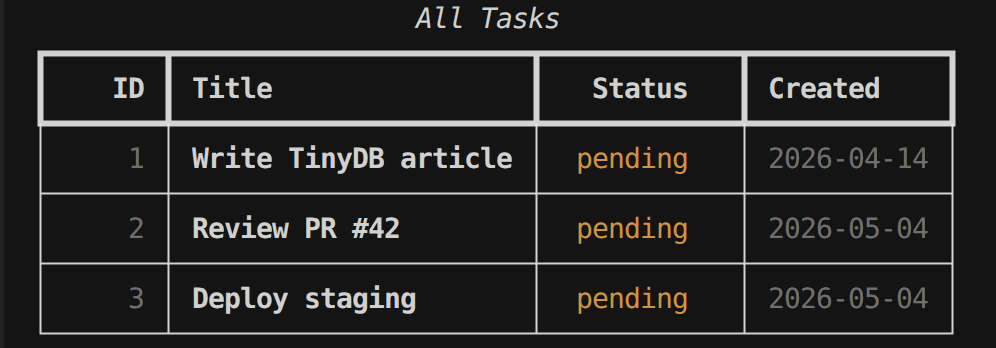

ChatBot: This website is about a content creation service that specializes in serving emerging businesses, particularly startups in the technology industry. The mission of this service is to equip these businesses with the necessary knowledge and perspectives they need to thrive in a competitive landscape. They offer tailored content that not only informs but also inspires action and drives results. Their team consists of experienced writers and tech enthusiasts who provide high-quality, customized articles that align with the client's vision, voice, and goals. They offer three content packages designed to support different stages of a startup's journey and provide SEO-optimized content with a typical turnaround time of 5-7 business days. They also help with content strategy, planning, and industry-specific insights.Example of interaction with the ChatBot

You can also check out the video version of the blog post:

Short Introduction to Website-Specific Chatbots

Website-specific chatbots are a significant advancement in improving online user experiences. In contrast to standard chatbots, these are customized to comprehend the unique content of a particular website, giving users information directly sourced from the site's pages.

They possess the ability to address queries, streamline navigation, and offer immediate assistance, rendering them indispensable tools for website owners striving to boost engagement and user satisfaction but can also be a tool used to allow users to understand the website content and navigate it without needing to read the entire content.

Get the eBook

Inside, you'll discover a plethora of Python secrets that will guide you through a journey of learning how to write cleaner, faster, and more Pythonic code. Whether it's mastering data structures, understanding the nuances of object-oriented programming, or uncovering Python's hidden features, this ebook has something for everyone.

Overview of the Technology Stack

The development of a chatbot capable of interpreting site content requires the use of various technologies:

Python: Serving as the foundational programming language, Python is celebrated for its ease of use and extensive library ecosystem.

LangChain and MistralAI: For the conversational AI element, we employ LangChain for its conversational retrieval chains and MistralAI for language embeddings and models.

FAISS: A library that facilitates efficient similarity search within large datasets, empowering the chatbot to locate the most pertinent content. Here we will use it with LangChain.

BeautifulSoup and Requests: These instruments are vital for web scraping, enabling us to systematically navigate and gather content from web pages.

Our project encompasses the following files:

search_links.py: Manages the search of links within the site.create_index.py: Tasked with indexing website content.chat_bot.py: Houses the logic governing the chatbot's interactions.main.py: The primary entry point for executing the chatbot application.

In the subsequent sections, we will delve into each file's content and logic details.

Please note that this project requires an API key for accessing the MistralAI platform. If you don't have an API Key, you can obtain one here (it requires a MistralAI account).

search_links.py: The Gateway to Web Content

The primary goal of search_links.py is to automate the discovery of navigable links on a website, creating a comprehensive list of URLs that a chatbot's indexing system can subsequently download and analyze. This process involves:

- Fetching web pages within the given domain.

- Parsing the HTML content to extract links.

- Recursively following those links to uncover more content, while avoiding duplicates and irrelevant links.

A key feature of search_links.py is its ability to discern which links are worth following. To maintain focus on valuable content, it filters out:

- Links leading to email addresses (

mailto:links). - Telephone links (

tel:links). - Direct links to image files (

.png,.jpg,.jpeg). - Links to OAuth endpoints.

- JavaScript links and anchors (

javascript:,#).

This selective process ensures that the script only pursues links that likely lead to substantive content relevant to the chatbot's knowledge base.

Let's take a closer look at the search_links.py code, which showcases the implementation of these concepts:

# Import the necessary libraries

from urllib.parse import urlparse, urljoin

import requests

from bs4 import BeautifulSoup

from langchain_community.document_loaders.async_html import AsyncHtmlLoader

from langchain_community.document_transformers.beautiful_soup_transformer import BeautifulSoupTransformer

from requests.auth import HTTPBasicAuth

# Get links from HTML

def get_links_from_html(html):

def get_link(el):

return el["href"]

return list(map(get_link, BeautifulSoup(html, features="html.parser").select("a[href]")))

# Find links

def find_links(domain, url, searched_links, username=None, password=None):

if ((not (url in searched_links)) and (not url.startswith("mailto:")) and (not url.startswith("tel:"))

and (not ("javascript:" in url)) and (not url.endswith(".png")) and (not url.endswith(".jpg"))

and (not url.endswith(".jpeg")) and ('/oauth/' not in url)

and ('/#' not in url) and (urlparse(url).netloc == domain)):

try:

if username and password:

requestObj = requests.get(url, auth=HTTPBasicAuth(username, password))

else:

requestObj = requests.get(url)

searched_links.append(url)

print(url)

links = get_links_from_html(requestObj.text)

for link in links:

find_links(domain, urljoin(url, link), searched_links, username, password)

except Exception as e:

print(e)

# Search links

def search_links(domain, url, username=None, password=None):

# Define the list of searched links

searched_links = []

# Find the links

find_links(domain, url, searched_links)

# Return the searched links

return searched_links

Here's a breakdown of the code:

- The necessary libraries are imported

- The

get_links_from_htmlfunction is defined to extract links from an HTML document. It uses BeautifulSoup to parse the HTML and select all the anchor tags with anhrefattribute. Theget_linkfunction is a helper function that extracts thehrefvalue from each anchor tag. - The

find_linksfunction is a recursive function that takes a domain, a URL, a list of searched links, and an optional username and password for HTTP Basic Authentication.- It checks if the URL meets certain conditions (e.g., it hasn't been searched before, belongs to the specified domain, it's not a mailto or tel link, etc.).

- If the URL meets these conditions, the function sends an HTTP GET request to the URL, adds the URL to the list of searched links, and prints the URL.

- It then extracts all the links from the HTML content of the URL and recursively calls itself for each extracted link.

- The

search_linksfunction is defined to initiate the link-searching process. It takes a domain, a URL, and an optional username and password for HTTP Basic Authentication.- It initializes an empty list of searched links and calls the

find_linksfunction with the provided domain, URL, and authentication details. It then returns the list of searched links.

- It initializes an empty list of searched links and calls the

create_index.py: The Heart of Content Comprehension

This script encapsulates the process of indexing website content, transforming it from mere text into a structured, searchable format.

At its core, create_index.py is responsible for:

- Fetching website content, leveraging the list of URLs provided by

search_links.py. - Parsing and cleaning the fetched content to extract useful text, removing HTML tags, scripts, and other non-essential elements.

- Processing the text through NLP techniques to understand its semantic meaning.

- Creating embeddings for the text, which are high-dimensional vectors representing the content in a way that captures its context and nuances.

- Indexing these embeddings in a searchable database, allows the chatbot to perform semantic searches against the indexed content.

Let's take a look at the code for create_index.py that captures the essence of the tasks described above: